AI Training in Healthcare: How AI Data Scraping Could Violate HIPAA and Patient Rights

Your health data could be used to train AI without your consent — we can help protect it.

AI is learning from real patient data, and your medical privacy might be at risk.

Every day, hospitals and tech companies collect mountains of medical information. Your lab results, prescription history, and even notes from your doctor’s visits could be quietly scraped and fed into artificial intelligence systems designed to “learn” from real patient data.

While AI promises breakthroughs in healthcare, this practice, known as data scraping, can put your most private health information at risk and may violate HIPAA protections.

You don’t have to stand by while your sensitive data is used without your permission. At The Lyon Firm, we fight for patients whose medical records have been misused. Call us at (513) 381-2333 or fill out our confidential online form to discuss your case and protect your rights.

“Really great firm! They were very responsive and respectful. I would highly recommend them!”

– Elizabeth W. | Client

Healthcare Data Scraping Lawsuit: How We Protect Patients

Filing a lawsuit against a major hospital system or powerful technology company can feel intimidating. At The Lyon Firm, our mission is simple: to stand up to corporate misconduct and restore your right to medical privacy.

When you work with our firm, you gain a team that has spent nearly 20 years challenging some of the largest healthcare systems and tech companies in the country.

If your health information was collected or shared without your permission, our attorneys can help by:

- Conducting a Comprehensive Investigation: We begin by tracing exactly where your information went and how it was used. Our team reviews vendor agreements, data-sharing practices, and AI development contracts to uncover how the data scraping occurred and determine the full scope of the misuse.

- Proving the Privacy Violation: We build a detailed case demonstrating how the unauthorized use of your information violates federal and state privacy laws, including HIPAA.

- Pursuing Compensation on Your Behalf: Our legal team aims to recover damages for emotional distress, invasion of privacy, and any financial or reputational harm you have suffered.

- Driving Lasting Change: Beyond compensation, our cases often result in meaningful reform. We hold organizations accountable and push them to strengthen data security and privacy protections, helping ensure future patients are not put at risk.

Understanding AI Model Training in Healthcare

Artificial Intelligence (AI) is transforming modern medicine. From detecting cancer earlier to helping doctors personalize treatments and accelerate drug research, AI promises to make healthcare faster, smarter, and more precise.

To achieve these advancements, AI systems rely on a critical resource: data. Training an AI model requires vast amounts of patient information so that algorithms can “learn” to recognize medical patterns and make accurate predictions. For example, to teach an AI system how to identify a tumor, developers typically provide very large sets of labeled images of both healthy and unhealthy tissue.

Much of this training data comes from electronic health records (EHRs), which may include a person’s diagnoses, prescriptions, laboratory results, medical images, and even doctor’s notes. Health and wellness apps also collect sensitive data, such as nutrition and activity information, to power consumer-facing AI tools.

In each of these cases, AI models depend on machine learning algorithms that analyze enormous amounts of data to improve care and decision-making. These systems can identify patients at risk for complications, detect disease earlier, and even help hospitals operate more efficiently.

CONTACT THE LYON FIRM TODAY

Please complete the form below for a FREE consultation.

ABOUT THE LYON FIRM

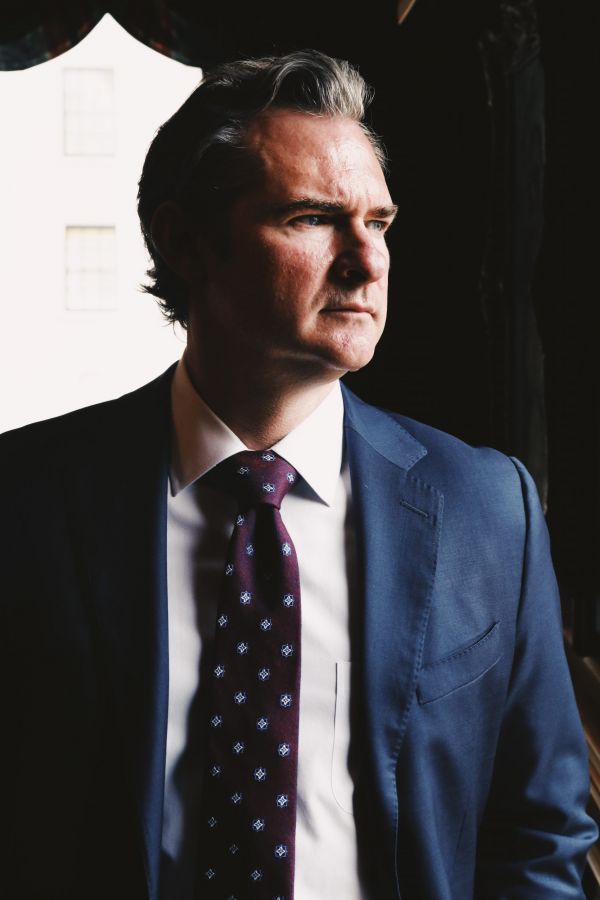

Joseph Lyon has 17 years of experience representing individuals in complex litigation matters. He has represented individuals in every state against many of the largest companies in the world.

The Firm focuses on single-event civil cases and class actions involving corporate neglect & fraud, toxic exposure, product defects & recalls, medical malpractice, and invasion of privacy.

NO COST UNLESS WE WIN

The Firm works on a contingency fee basis, advancing all litigation costs and accepting the full financial risk. This gives our clients full access to the legal system and reduces financial stress while they focus on their healthcare and financial needs.

What Is Data Scraping?

Data scraping is the automated collection of large amounts of information from websites, databases, or electronic systems. Specialized software can rapidly scan and extract data without the usual safeguards or consent that protect personal information.

While data scraping once focused on public platforms such as social media or retail sites, it has now entered one of the most sensitive spaces imaginable: healthcare.

When automated data collection targets hospitals, research databases, or health-related applications, the risks increase dramatically. Healthcare data is among the most personal information a person can have, and using it without permission can violate privacy laws, ethical standards, and basic patient trust.

The Dangers of Data Scraping

Patient information used in AI systems may be protected under the Health Insurance Portability and Accountability Act (HIPAA) and other privacy laws. Even when data is “deidentified,” there is still a risk that it could become identifiable again once combined with other information or processed by machine-learning algorithms.

This means that what appears to be anonymous medical data could, under certain conditions, reveal identifiable health information, placing patients’ privacy at risk and exposing healthcare organizations to potential legal consequences.

Some organizations and technology developers use scraping tools to obtain data faster for healthcare AI training projects. Rather than following strict legal processes to obtain properly authorized data, they may try to access or repurpose information from other systems. This practice can expose patient details that should remain confidential.

As AI continues to reshape the healthcare industry, the balance between innovation and privacy has never been more important. You deserve to know that your medical history is safe and your privacy is respected.

At The Lyon Firm, we stand on the front lines of data privacy litigation. If you believe your health information was collected or shared without consent for AI training, you don’t have to face it alone. Call (513) 381-2333 or fill out our private online form for a free, confidential consultation.

Your Data at Risk

HIPAA created a term called Protected Health Information, or PHI. PHI is anything that connects your health information directly to you. It is the core of your medical identity.

What exactly is at risk when healthcare data is used without permission? Nearly every detail of your medical history:

- Medical Records and Diagnoses: Everything from a routine blood test result to a diagnosis of a chronic or sensitive condition.

- Mental Health Notes: The most personal records, including therapy notes, psychiatric evaluations, and medication history.

- Billing and Insurance Claims: Information about procedures you’ve had, which can reveal sensitive health issues.

- Genetic and Biometric Data: Your DNA test results, fingerprints, or other unique body identifiers.

HIPAA and AI in Healthcare: When Protection Fails

A central purpose of HIPAA is to keep your medical information private and to ensure that only authorized people can use it for specific reasons (like treatment, payment, or hospital operations).

The law is clear: a healthcare provider generally cannot share your identifiable information with a third party (like an AI developer) for other purposes unless the provider has your authorization. Using identifiable data for unauthorized model training, or sharing poorly de-identified data that can be traced back to you, can violate HIPAA.

The Consequences for Patients

A HIPAA violation isn’t just a legal slip-up; it has real, serious consequences for you, the patient:

- Identity Theft and Fraud: Your health information can be used by criminals to file false insurance claims or commit medical fraud.

- Discrimination and Stigma: If an employer or insurance company gets hold of sensitive details about your mental health or serious medical conditions, you could face unfair discrimination in the future.

- Financial Harm: You might have to pay out-of-pocket costs to fix errors caused by the data breach or pay for credit monitoring services for years to come.

- Loss of Trust: Most importantly, you lose the confidence that your most personal information is safe, making you less willing to seek future care.

When these violations occur, the resulting healthcare data scraping lawsuit seeks to compensate patients for these very real harms and prevent the abuse from happening again.

In a recent lawsuit, Reddit accused Perplexity, a $20 billion AI company, of illegally scraping Reddit content after being explicitly told not to. Reddit even set a “digital trap” to catch the company in the act, confirming that Perplexity had bypassed protective barriers to collect data it had no right to use.

This high-profile case highlights a growing concern in the AI industry: companies taking data without consent to power their technology. While Reddit’s fight involves online content, the same unlawful tactics can endanger medical and personal health data used in AI training in healthcare.

Why Hire The Lyon Firm

As hospitals and technology companies race to build smarter systems, too many have ignored their legal and ethical obligations, allowing unauthorized access to patient information to become routine. Your health history is not a business asset.

If your private health information has been exposed, shared, or used to train an AI model without your consent, The Lyon Firm is here to help.

Our attorneys have led some of the nation’s most significant privacy and healthcare data cases, standing up to major hospital networks and tech corporations to secure justice for individuals and families. We combine decades of courtroom experience with a client-focused approach, because we know these cases are about more than data; they are about people.

Call (513) 381-2333 or reach out online for a free, confidential consultation. We fight to ensure your privacy and your trust in healthcare are fully restored.


Healthcare Data Scraping Lawsuit

The time limit for filing a lawsuit varies significantly by state and depends on the legal claim involved (for example, a privacy claim arising from a HIPAA violation, breach of contract, or negligence). These deadlines can be as short as one or two years. Because of this complexity and the constant changes in the law around AI, it is critical to consult an attorney as soon as you suspect your data may have been involved in a breach or unauthorized use.

Patients can take important steps to safeguard their personal health information. Always read privacy notices from your doctors, hospitals, and health apps to see how your data may be shared or used for “research” or “service improvement.” Ask your providers how their AI tools are trained and whether outside companies can access your information. You also have rights under HIPAA to review, correct, and track disclosures of your medical records—use those rights to stay informed and protect your privacy.

While HIPAA governs how healthcare providers and insurers handle patient information, the Federal Trade Commission (FTC) also plays a key role in protecting your health privacy, especially as AI becomes more common. The FTC enforces consumer protection laws and can take action against companies, such as fitness apps or health-tracking websites, that misuse or share personal health data without consent.

Health apps and wearable devices are not always directly covered by HIPAA, but they are often covered by other laws enforced by the FTC. HIPAA only applies to “Covered Entities” (like hospitals, doctors, and insurers). Most standalone mental health apps, fitness trackers, and symptom checkers are not Covered Entities. However, if they share your private data with third parties (especially for advertising or AI training) without clear consent, the FTC can take, and has taken, legal action against them for deceptive practices and violating consumer privacy.

- Answer a few general questions.

- A member of our legal team will review your case.

- We will determine, together with you, what makes sense for the next step for you and your family to take.